Our scenario is most likely to be fully unsupervised, i.e. unsupervised anomaly detection. It may not be sufficient to simply examine how individual features are distributed. We also need to understand the relationships between the individual features, so that we can recognize those relationships and identify situations that no longer preserve them.

We can train 4 models to each predict one feature and then combine their accuracy scores to give a measure of anomaly.

Before we discuss the implementation, we should briefly consider the second of the two papers mentioned. Having established the general approach, the important question is how we should weight and combine the individual assessments from each predictor. In the paper, the authors use the error/accuracy (in their case, from the confusion matrix; in our case, that will be something like the mean absolute error) or, more specifically, -log(err). Provided the error is below 1, this will be a positive number ranging from a low value (interpretation: the model was not a very good predictor, so neither really bad nor really good predictions should say much about its overall correctness) to a high one (interpretation: we expect a consistently high level of accuracy). This is called the surprisal factor, which we will use to weight the predictions from each classifier.

So for this example we have a limited number of features (only 4) but with 2 very different distributions. I’ve plotted the distributions of our four values in the following graphs. The vibration distributions are, as expected, fairly similar to one another, with one strong synchronized peak together with a second one that varies across the vibration dimensions.

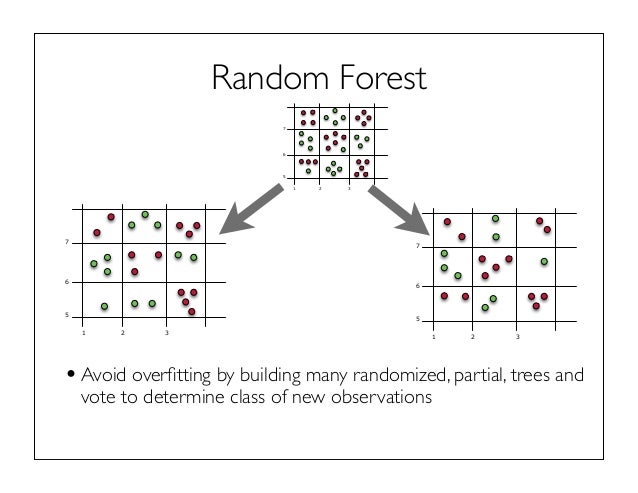
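To make the weighting idea a little more concrete, here is a minimal sketch of how a surprisal factor of -log(err) could be used to combine the four predictors. Note the assumptions: the function names `surprisal_weight` and `anomaly_score`, the training errors in `train_mae`, and the choice of a weighted mean as the combination rule are all mine for illustration, not taken from the papers. I also assume the errors have been scaled so that a useful model has an error below 1; anything at or above 1 simply gets a weight of zero.

```python
import math

def surprisal_weight(train_error, eps=1e-12):
    """Return -log(err), clamped to zero for models whose error is 1 or more."""
    # eps guards against log(0) for a model with a perfect training score.
    return max(0.0, -math.log(max(train_error, eps)))

def anomaly_score(sample_errors, train_errors):
    """Weighted mean of per-feature errors, weighted by each model's -log(err).

    This combination rule is an illustrative assumption, not the papers' formula.
    """
    weights = [surprisal_weight(e) for e in train_errors]
    total = sum(weights)
    if total == 0.0:
        raise ValueError("no predictor has a training error below 1")
    return sum(w * e for w, e in zip(weights, sample_errors)) / total

# Hypothetical mean absolute errors on scaled training data, one per predictor
# (temperature, vibration x, vibration y, vibration z).
train_mae = [0.02, 0.05, 0.05, 0.60]
weights = [surprisal_weight(e) for e in train_mae]

# Per-feature errors for two new readings: one typical, one where the
# most trusted predictor is suddenly badly wrong.
typical = anomaly_score([0.02, 0.04, 0.06, 0.50], train_mae)
suspect = anomaly_score([0.90, 0.04, 0.06, 0.50], train_mae)
```

The point of the weighting is visible in the two example readings: a large miss from a predictor that was very accurate in training moves the score a lot, whereas the weakest predictor (training error 0.60) contributes very little either way.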

We won’t spend too long on the underlying theory (the two links provide all the background). Instead we’ll look at how we can implement this with machine data. We’ll be using data from a Balluff Condition Monitoring sensor: specifically, temperature and vibration readings in the x, y and z dimensions.

Just to make the distinction between the types of training transparent:

- unsupervised training: data that is not labelled.
- semi-supervised training: data that is partially labelled.
- supervised training: data that is clearly labelled (with a target value or as belonging to a specific class/category).

At the heart of the approach outlined in the two papers are these two intuitions. Firstly, that “the normal data may not have discernible positions in feature space, but do have consistent relationships among some features that fail to appear in the anomalous examples”. And secondly: “predictors that are accurate on the training set will continue to be accurate when predicting the feature values of examples that come from the same distribution as the training set, and they will make more mistakes when predicting the feature values of examples that come from a different distribution.”

In other words, a model uses the other features to predict the target feature. Repeating this for each feature, we build an ensemble of predictors; in doing so we have actually trained them to recognise the “shape” inherent in the data as a whole.

As an analogy, imagine two people are out on a tandem bicycle: they can vary their speed, direction and cadence, but whatever they do has to be done in sync with one another! They have degrees of freedom, but only as a unit. If we construct a special tandem bike that can take 4 riders, this will be doubly true! Or imagine a spider’s web: we preserve the shape of the web if we tug on all of the outer nodes of the web in the same way at the same time, but if we just pull on two of them, a distortion of the shape becomes evident.
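The ensemble of per-feature predictors described above can be sketched in a few lines. This is only an illustration under stated assumptions: I use a plain least-squares linear model from NumPy as the predictor (the papers do not prescribe this), the helper names `fit_feature_predictors` and `prediction_errors` are hypothetical, and the data is synthetic, generated so that all four columns move together. It is not real Balluff sensor data.

```python
import numpy as np

def fit_feature_predictors(X):
    """Fit one linear model per column, predicting it from the other columns."""
    n_samples, n_features = X.shape
    models = []
    for target in range(n_features):
        others = np.delete(X, target, axis=1)
        # Append a constant column so the model can learn an intercept.
        design = np.column_stack([others, np.ones(n_samples)])
        coef, *_ = np.linalg.lstsq(design, X[:, target], rcond=None)
        models.append(coef)
    return models

def prediction_errors(models, X):
    """Absolute error of every per-feature predictor for every row of X."""
    errors = np.empty(X.shape, dtype=float)
    for target, coef in enumerate(models):
        others = np.delete(X, target, axis=1)
        design = np.column_stack([others, np.ones(len(X))])
        errors[:, target] = np.abs(design @ coef - X[:, target])
    return errors

# Synthetic stand-in for the four readings: a shared "load" drives them all.
rng = np.random.default_rng(0)
load = rng.normal(size=500)
X_train = np.column_stack([
    40 + 2.0 * load,  # temperature
    1.0 * load,       # vibration x
    0.8 * load,       # vibration y
    1.2 * load,       # vibration z
]) + rng.normal(scale=0.05, size=(500, 4))

models = fit_feature_predictors(X_train)

normal_row = X_train[:1]
broken_row = normal_row.copy()
broken_row[0, 1] += 3.0  # x vibration moves on its own, out of sync with the rest

normal_err = prediction_errors(models, normal_row).sum()
broken_err = prediction_errors(models, broken_row).sum()
```

In tandem-bike terms, `broken_row` is one rider suddenly pedalling at a different cadence: each value on its own may still look plausible, but the predictors that learned how the readings move together now disagree with what they see, so `broken_err` comes out much larger than `normal_err`.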
Unsupervised anomaly detection with unlabeled data: is it possible to detect outliers when all we have is a set of unannotated, context-free signals? The short answer is yes. This is the essence of how one deals with network intrusion, fraud, and other types of low-instance anomaly. In those scenarios we assume that nearly all of our training data is “good” (i.e. non-anomalous) and we use a model to analyze it for patterns and trends. We can then use this model with new, unseen data to identify and flag anything that does not fit the expectations. In this article I am going to discuss an approach based on two papers.
